In the era of booming cloud computing, artificial intelligence (AI), and high-performance computing (HPC), data center processors face unprecedented challenges. On one hand, cloud-native applications demand higher core density and energy efficiency to support massive concurrent workloads. On the other hand, AI training and inference tasks require peak single-core performance and acceleration capabilities. Intel, a long-standing leader in server CPUs, is addressing these demands with its sixth-generation Xeon processor series (Xeon 6). Launched in stages during 2024, the series includes the efficiency-core (E-core) variant Sierra Forest (June 2024) and the performance-core (P-core) variant Granite Rapids (September 2024). Together they mark a shift from a “one-size-fits-all” design to targeted architectures. This transformation is not isolated; it is supported by deep collaborations with ecosystem partners.

For instance, Intel’s 15-year partnership with Alibaba Cloud has driven optimizations in cloud infrastructure and delivered significant results in AI inference and risk control. Drawing on public benchmarks and industry reports, this article analyzes Xeon 6’s core technologies, performance advantages, real-world applications, and position in the competitive landscape.

Intel’s Strategic Shift: From Universal to Specialized

Historically, Intel Xeon processors adopted a single architecture to balance all workloads, but this struggled to address the extremes of cloud-native (emphasizing density and efficiency) and AI/HPC (emphasizing performance) workloads. The Xeon 6 series introduces a dual-track strategy: P-core for high-performance scenarios and E-core for cloud efficiency. This split allows Intel to scale flexibly based on market needs, avoiding the limitations of a one-size-fits-all approach.

- P-core (Granite Rapids): Up to 128 cores, optimized for AI inference and HPC simulations. Benchmarks show roughly 1.38x the multi-threaded performance of the previous-generation Emerald Rapids (dual-socket configuration), and it outperforms the AMD EPYC 9754 in GROMACS HPC tasks.
- E-core (Sierra Forest): Up to 288 cores (with the 288-core models arriving in 2025), tailored for cloud-native workloads. Phoronix tests indicate ~19% better power efficiency than the AMD EPYC 9754 (Bergamo), making it well suited to multi-threaded workloads like web services.

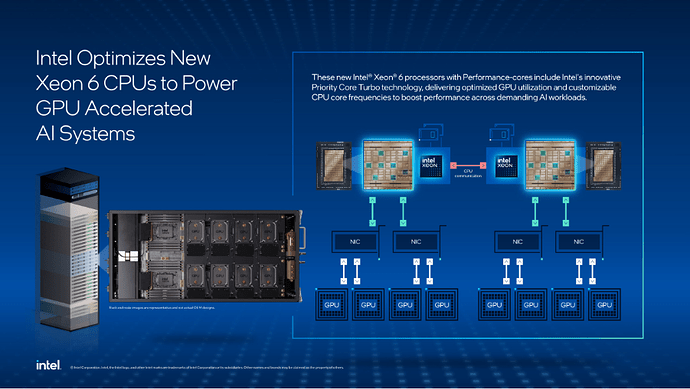

This shift is bolstered by Intel’s collaboration with NVIDIA (enhancing the AI ecosystem) and long-term synergy with Alibaba Cloud, which integrates Xeon into its Apsara (Feitian) operating system for resource pooling.

Xeon 6 P-core: Four Key Technical Advantages

The Xeon 6 P-core leverages a modular chiplet design, advanced process nodes, and accelerators to improve yield and performance. The table below summarizes its key advantages, based on Intel’s official specifications and independent benchmarks.

| Aspect | Key Technologies & Advantages | Details & Benefits |
|---|---|---|
| Packaging & Process | EMIB (Embedded Multi-die Interconnect Bridge) for chiplet packaging | Compute dies (Intel 3 process, EUV lithography) are separated from I/O dies (Intel 7), boosting yield by 20-30%. Up to 5 chiplets are integrated, exceeding the size of a monolithic die, reducing costs, and enabling 128-core scaling. |
| Cores & Cache | Redwood Cove cores + large L3 cache | Up to 128 cores, 2MB of L2 cache per core, and up to 504MB of total L3 cache (about 1.6x the 320MB of 5th-gen Emerald Rapids and about 4.5x the 112.5MB of 4th-gen Sapphire Rapids). SPEC CPU 2017 tests show 1.2x multi-core performance, which benefits AI matrix operations. |
| I/O Interface | 12-channel DDR5 + CXL 2.0 support | Memory bandwidth is about 70% higher than with 8-channel DDR5, with MRDIMM support up to 8800MT/s. CXL 2.0 enables memory pooling, supporting >1PB capacity and enhancing data center flexibility by 15-20%. |
| Accelerator Design | Integrated AMX (Advanced Matrix Extensions) and QAT (QuickAssist Technology) | AMX accelerates INT8 and BF16 matrix operations, with FP16 added on Xeon 6 P-cores, boosting AI inference 2-3x (e.g., BERT-Large). QAT offloads compression and encryption, reducing CPU core load by up to 30% and lowering power consumption. |
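
The ~70% bandwidth figure in the I/O row can be sanity-checked with the standard peak-bandwidth formula: channels × transfer rate × 8 bytes per transfer (each DDR5 channel carries 64 data bits). The sketch below assumes DDR5-5600 for the 8-channel baseline and DDR5-6400 for 12-channel Xeon 6; these speeds are illustrative assumptions, and sustained bandwidth in practice is lower than these theoretical peaks.

```python
# Peak theoretical DRAM bandwidth = channels x transfers/s x bytes per transfer.
# A DDR5 channel is 64 data bits wide (two 32-bit subchannels): 8 bytes per transfer.
BYTES_PER_TRANSFER = 8

def peak_bandwidth_gbs(channels: int, mt_per_s: int) -> float:
    """Return peak bandwidth in decimal GB/s for a channel count and speed in MT/s."""
    return channels * mt_per_s * 1e6 * BYTES_PER_TRANSFER / 1e9

# Assumed configurations for illustration, not a specific SKU's rating.
prior = peak_bandwidth_gbs(8, 5600)    # 8-channel DDR5-5600
xeon6 = peak_bandwidth_gbs(12, 6400)   # 12-channel DDR5-6400
mrdimm = peak_bandwidth_gbs(12, 8800)  # 12-channel MRDIMM-8800

print(f"8ch DDR5-5600:    {prior:.1f} GB/s")
print(f"12ch DDR5-6400:   {xeon6:.1f} GB/s ({xeon6 / prior - 1:.0%} higher)")
print(f"12ch MRDIMM-8800: {mrdimm:.1f} GB/s")
```

Under these assumptions, the channel count and speed together account for roughly a 71% uplift, in line with the ~70% quoted above.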

These innovations also make Xeon 6 more efficient to manufacture: because each chiplet is much smaller than a monolithic die, a single defect scraps less silicon, while EMIB provides >1TB/s of bandwidth for low-latency interconnects between dies.
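
Why smaller dies help yield can be illustrated with the classic Poisson yield model, Y = exp(-D0 × A), where D0 is defect density and A is die area. The defect density and areas below are hypothetical, and the sketch assumes each chiplet is tested before packaging (known-good die) while ignoring assembly losses, so it shows the direction of the effect rather than Intel's actual numbers.

```python
import math

def poisson_yield(area_cm2: float, defect_density_per_cm2: float) -> float:
    """Fraction of dies expected to have zero defects: Y = exp(-D0 * A)."""
    return math.exp(-defect_density_per_cm2 * area_cm2)

# Hypothetical inputs, chosen only for illustration.
D0 = 0.1          # defects per cm^2
TOTAL_AREA = 6.0  # cm^2 of compute logic
N_CHIPLETS = 3    # the same logic split into three equal dies

mono_yield = poisson_yield(TOTAL_AREA, D0)
chiplet_yield = poisson_yield(TOTAL_AREA / N_CHIPLETS, D0)

# With known-good-die testing, a defect scraps only the chiplet it lands on,
# so the fraction of usable silicon equals the per-chiplet yield.
print(f"monolithic die yield: {mono_yield:.1%}")
print(f"per-chiplet yield:    {chiplet_yield:.1%}")
```

With these inputs the monolithic die yields about 55% while each chiplet yields about 82%; the gap widens as total area or defect density grows.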

Alibaba Cloud’s Implementation: CIPU and Apsara Integration

As a key Intel partner, Alibaba Cloud leverages Xeon 6 to build high-efficiency cloud infrastructure. Its custom CIPU (Cloud Infrastructure Processing Unit) and Apsara distributed operating system handle virtualization and networking tasks, allowing Xeon cores to focus on compute.

- CIPU’s Role: CIPU 2.0 decouples I/O from compute, supporting 100G networking and eRDMA acceleration. In Alibaba’s 9th-gen ECS instances paired with Xeon 6, performance improves by 67%, which suits high-concurrency tasks like video transcoding.
- Apsara Scheduling: Apsara manages resource pools and supports CXL memory sharing for dynamic allocation. Alibaba’s Panjiu super-node servers, which integrate CIPU and Xeon 6, deliver Pb/s bandwidth with <100ns latency.
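
The pooling idea behind CXL memory sharing can be modeled as a shared capacity that hosts lease and return on demand. The `MemoryPool` class below is a hypothetical toy for illustration only; it is not Apsara's scheduler or any CXL fabric-manager API, and it ignores latency, NUMA placement, and failure handling.

```python
from dataclasses import dataclass, field

@dataclass
class MemoryPool:
    """Hypothetical toy model of a shared memory pool leased to hosts on demand."""
    capacity_gb: int
    leases: dict[str, int] = field(default_factory=dict)

    @property
    def free_gb(self) -> int:
        """Capacity not currently leased to any host."""
        return self.capacity_gb - sum(self.leases.values())

    def allocate(self, host: str, size_gb: int) -> bool:
        """Lease size_gb to host if the pool has room; return whether it succeeded."""
        if size_gb <= 0 or size_gb > self.free_gb:
            return False
        self.leases[host] = self.leases.get(host, 0) + size_gb
        return True

    def release(self, host: str, size_gb: int) -> None:
        """Return memory from host to the pool, never more than the host holds."""
        held = self.leases.get(host, 0)
        remaining = held - min(size_gb, held)
        if remaining == 0:
            self.leases.pop(host, None)
        else:
            self.leases[host] = remaining

if __name__ == "__main__":
    pool = MemoryPool(capacity_gb=1024)
    print(pool.allocate("host-a", 512))  # True: 512 GB leased to host-a
    print(pool.allocate("host-b", 768))  # False: only 512 GB free
    pool.release("host-a", 256)
    print(pool.allocate("host-b", 768))  # True: 768 GB free after the release
```

The design point is that capacity no longer sits stranded in one server: memory released by one host immediately becomes available to another.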

This integration is deployed across global data centers, supporting Alibaba Cloud’s AI infrastructure expansion, including collaborations with NVIDIA for physical AI.

AI Inference Applications: Cost-Effectiveness and Case Studies

While GPUs dominate AI training, CPUs can be a cost-effective choice for inference. Xeon 6’s AMX accelerators make CPU inference competitive with GPUs for many workloads, particularly in finance, vision, and recommendation systems.
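
Before relying on AMX for CPU inference, it is worth confirming that the processor and kernel expose it. On Linux, AMX-capable CPUs report feature flags such as `amx_tile`, `amx_bf16`, and `amx_int8` in `/proc/cpuinfo`, and newer kernels also report `amx_fp16`. The helper below is a minimal sketch that parses that text; flag presence is only a first check, and whether a given inference framework actually uses AMX still depends on its build and data types.

```python
from pathlib import Path

# AMX-related feature flags as reported by Linux in /proc/cpuinfo.
# amx_fp16 appears only on supporting CPUs with newer kernels.
AMX_FLAGS = ("amx_tile", "amx_bf16", "amx_int8", "amx_fp16")

def parse_flags(cpuinfo_text: str) -> set[str]:
    """Return the feature flags on the first line starting with 'flags'."""
    for line in cpuinfo_text.splitlines():
        if line.startswith("flags"):
            _, _, value = line.partition(":")
            return set(value.split())
    return set()

def amx_support(cpuinfo_text: str) -> dict[str, bool]:
    """Map each AMX flag to whether it appears in the cpuinfo text."""
    flags = parse_flags(cpuinfo_text)
    return {flag: flag in flags for flag in AMX_FLAGS}

if __name__ == "__main__":
    cpuinfo = Path("/proc/cpuinfo")
    if cpuinfo.exists():
        print(amx_support(cpuinfo.read_text()))
    else:
        print("/proc/cpuinfo not found (not running on Linux?)")
```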

- Ant Group Risk Control Case: Ant Group uses Xeon 6’s AMX to achieve a 2.3x performance gain in risk-control inference, with a 72% cost reduction. Its privacy-enhanced AI platform, combined with Intel TDX (Trust Domain Extensions), enables secure multi-party computation for real-time fraud detection.
- Benchmark Comparison: On Llama-2 70B, Xeon 6 per-token inference latency is under 86ms, half that of the prior generation. Phoronix tests show 7x higher tokens/s throughput with AMX enabled than without (batch size 6).
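
The headline numbers above translate into more intuitive units with simple arithmetic. The sketch assumes the <86ms Llama-2 70B figure is an average per-token latency for a single stream, and that cost per inference scales inversely with throughput at a fixed hourly hardware cost; both are simplifying assumptions. Under them, a 2.3x speedup alone cuts cost per inference by about 57%, so the reported 72% reduction presumably also reflects factors the case study does not break down.

```python
def tokens_per_second(per_token_latency_ms: float) -> float:
    """Single-stream decode rate implied by an average per-token latency."""
    if per_token_latency_ms <= 0:
        raise ValueError("latency must be positive")
    return 1000.0 / per_token_latency_ms

def cost_reduction_from_speedup(speedup: float) -> float:
    """Fractional drop in cost per inference when throughput rises by `speedup`
    on hardware whose hourly cost stays the same (simplifying assumption)."""
    if speedup <= 0:
        raise ValueError("speedup must be positive")
    return 1.0 - 1.0 / speedup

print(f"~{tokens_per_second(86):.1f} tokens/s per stream at 86 ms/token")
print(f"~{cost_reduction_from_speedup(2.3):.0%} lower cost per inference from a 2.3x speedup")
```

Because the benchmark reports latency under 86ms, the decode rate it implies is a lower bound of roughly 11.6 tokens/s per stream.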

Compared to alternatives, Xeon 6’s integrated accelerators reduce TCO by 20-30% without requiring additional hardware.

Technology Democratization: From Data Centers to Edge

Xeon 6’s technologies extend beyond servers: AMX and QAT are moving into edge-oriented Xeon parts, part of the same push toward on-device acceleration that is driving NPU adoption in phones and AI-powered image recognition. Techniques developed for data center challenges (e.g., memory bottlenecks), such as CXL memory pooling, are also being extended to IoT and 5G infrastructure. Intel and Alibaba Cloud’s edge computing platforms already support real-time AI, with broader consumer applications expected by 2026.

Competitive Landscape and Future Outlook

Xeon 6 outperforms its predecessor in benchmarks but faces competition from AMD EPYC Turin (up to 192 Zen 5c cores) and Arm-based AWS Graviton. It leads AMD’s Bergamo by ~19% in cloud efficiency but trails by 5-10% in HPC workloads. Intel’s market share is projected to stabilize above 60% in 2025, driven by ecosystem strength (e.g., Alibaba’s 15-year partnership).

Looking ahead, Xeon 7 (Diamond Rapids), expected in 2026, should expand the chiplet design and adopt CXL 3.0, supporting up to 192 cores and multi-level switching. Overall, Xeon 6 marks a critical step in Intel’s competitive resurgence, though continued software ecosystem optimization is needed for widespread adoption.