The rapid expansion of Artificial Intelligence (AI) is creating significant investment opportunities, but knowing where to start can be difficult. A practical entry point for understanding this ecosystem is its physical backbone: data centers and the servers within them.

A data center is a large facility housing vast arrays of computer hardware, primarily servers, that provide remote computing services, often called “cloud” services. The concept isn’t new; services like email and cloud storage have long relied on data centers. The AI boom, however, has given rise to specialized “AI data centers” built around computational power for training and running AI models, rather than primarily around data storage.

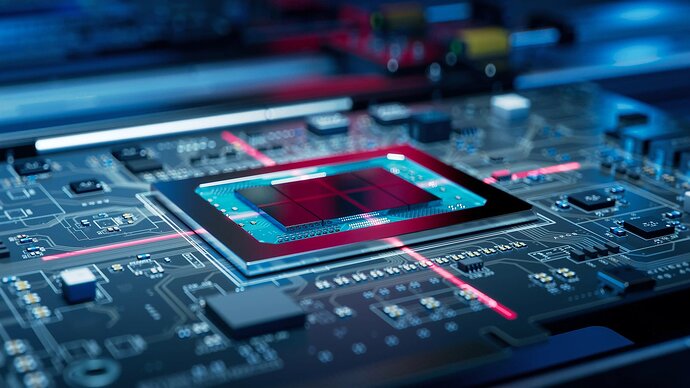

At the heart of every data center are servers. Think of a single server as a powerful computer. The current trend is to connect hundreds or thousands of these servers in an approach called “scale-out,” creating immense, interconnected computing clusters. Running this much hardware at full load generates enormous heat, which is why advanced cooling systems, now shifting from air to liquid cooling, have become a critical and investable component of modern data centers.

Building and operating these facilities involves a massive supply chain: construction, power supply, cooling systems, and the IT hardware itself. The IT hardware within a server includes the processor (such as GPUs for AI), high-bandwidth memory (HBM) for fast data access, and other components such as advanced circuit boards and power supplies. Assembling a server requires all of these parts, but the real technological challenge often lies in integration and reliability under constant, high-stress operation: for instance, ensuring that liquid-cooling connectors never leak and destroy millions of dollars’ worth of equipment.

This global infrastructure build-out, with projections of spending reaching hundreds of billions of dollars, represents a major opportunity for hardware suppliers. Regions with strong manufacturing bases, like Taiwan, are positioned as key players in this supply chain, providing everything from the most advanced semiconductors to cooling modules and power systems.

Meanwhile, other large markets are developing their own competitive edges. For example, regions with lower electricity costs can significantly reduce the operating expenses of running power-hungry data centers. Furthermore, developing a domestic ecosystem of AI chip and server manufacturers can create a self-sustaining industrial loop, even if individual components aren’t the most advanced in the world.
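To make the electricity-cost point concrete, the arithmetic can be sketched in a few lines of Python. This is a simplified illustration, not a cost model: the facility size and the two electricity prices are hypothetical round numbers, not figures for any real data center or region, and the sketch assumes the facility draws a constant average power all year.

```python
# Hypothetical illustration: annual electricity cost of a data center.
# Assumes a constant average power draw; real loads and tariffs vary.
HOURS_PER_YEAR = 24 * 365  # 8,760 hours


def annual_power_cost(facility_mw: float, price_per_kwh: float) -> float:
    """Return the yearly electricity bill in dollars.

    facility_mw: average total power draw in megawatts (hypothetical input)
    price_per_kwh: electricity price in dollars per kilowatt-hour
    """
    facility_kw = facility_mw * 1000           # convert MW to kW
    energy_kwh = facility_kw * HOURS_PER_YEAR  # energy used over one year
    return energy_kwh * price_per_kwh


# Hypothetical 100 MW facility at two illustrative price levels
high_price_cost = annual_power_cost(100, 0.10)  # roughly $87.6M per year
low_price_cost = annual_power_cost(100, 0.05)   # roughly $43.8M per year
print(f"Annual savings: ${high_price_cost - low_price_cost:,.0f}")
```

Because the cost scales linearly with the price per kilowatt-hour, halving the electricity price halves the power bill, and for a facility running around the clock that saving recurs every year.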

For investors, especially those looking at markets strong in hardware manufacturing, the logic is clear: follow the infrastructure. Understanding the components critical to building AI data centers and servers, from chips and memory to cooling and power, can reveal the companies likely to benefit from this multi-year expansion. The key is identifying which technologies are becoming essential in this new AI-driven hardware paradigm and which suppliers are mastering them.